Agent broke at step 30?

Fix step 30.

Not steps 1 through 29 again. Fork at the failure, replay with the fix, prove it works – all on your machine, never in someone else's cloud.

Try it now: run rewind demo && rewind inspect latest. No API keys needed.

Other tools show what happened.

Rewind lets you change it.

Langfuse / LangSmith / Helicone

- View traces after the run

- Re-run the entire agent to test a fix

- Manual diagnosis — read logs, guess what went wrong

- No timeline branching

- Manual before/after comparison

- Separate tools for tracing vs. evals

Rewind

- View traces, then change them

- Fork at the failure, replay just the fix

- AI diagnosis — rewind fix finds the root cause for you

- Branch at any step; steps before the fork replay from cache at 0 tokens

- LLM-as-judge scores diffs automatically

- Tracing, debugging, and evals in one model

Your agents. Your data.

Your machine.

Every competing tool requires a cloud account, an API key, and a third party you trust with your prompts. Rewind runs entirely on your laptop.

Your data never leaves your machine

Every prompt, response, and context window stays in a local SQLite database. No cloud account. No telemetry. No data processing agreements to sign.

Fully open source, MIT licensed

Read every line. Fork it. Audit it. Contribute to it. No vendor lock-in, no license gating, no feature paywalls. The code is the product.

Single binary, zero dependencies

One statically linked Rust binary. No Docker, no Postgres, no Redis, no cloud account. Works offline, on air-gapped networks, behind any firewall.

Free forever, no usage limits

No per-seat pricing. No eval run quotas. No metered API calls. Record as many sessions as your disk can hold. The infrastructure cost is zero.

Rewind vs. cloud platforms

| | Rewind | Cloud platforms |
|---|---|---|
| Data residency | Your machine | Their cloud |
| API key for infrastructure | Not needed | Required |
| Works offline | Yes | No |
| Source code | MIT on GitHub | Closed |
| Cost | Free | $49–199+/mo |
| Dependencies | Zero | Cloud + DB |

Already using Langfuse or LangSmith? You don't have to choose. Import their traces into Rewind for local debugging, or export Rewind sessions back to your team dashboard.

From first install to fixed agent in minutes

One line of code. Zero config. No API keys needed.

Record

Add one line to your Python agent. Every LLM call, tool invocation, and context window is captured automatically. Or use the HTTP proxy for any language.

Inspect

See the exact context window at every step: every message, system prompt, and tool response the model saw. Available in the TUI, web dashboard, or CLI.

Fix

rewind fix diagnoses the failure with AI, suggests a fix, and optionally forks and replays with the patch applied. One command takes you from broken to proven.

Chrome DevTools for AI agents

Record, inspect, fork, replay, diff, evaluate. All on the same timeline. The only tool where debugging, tracing, and evals share the same data model.

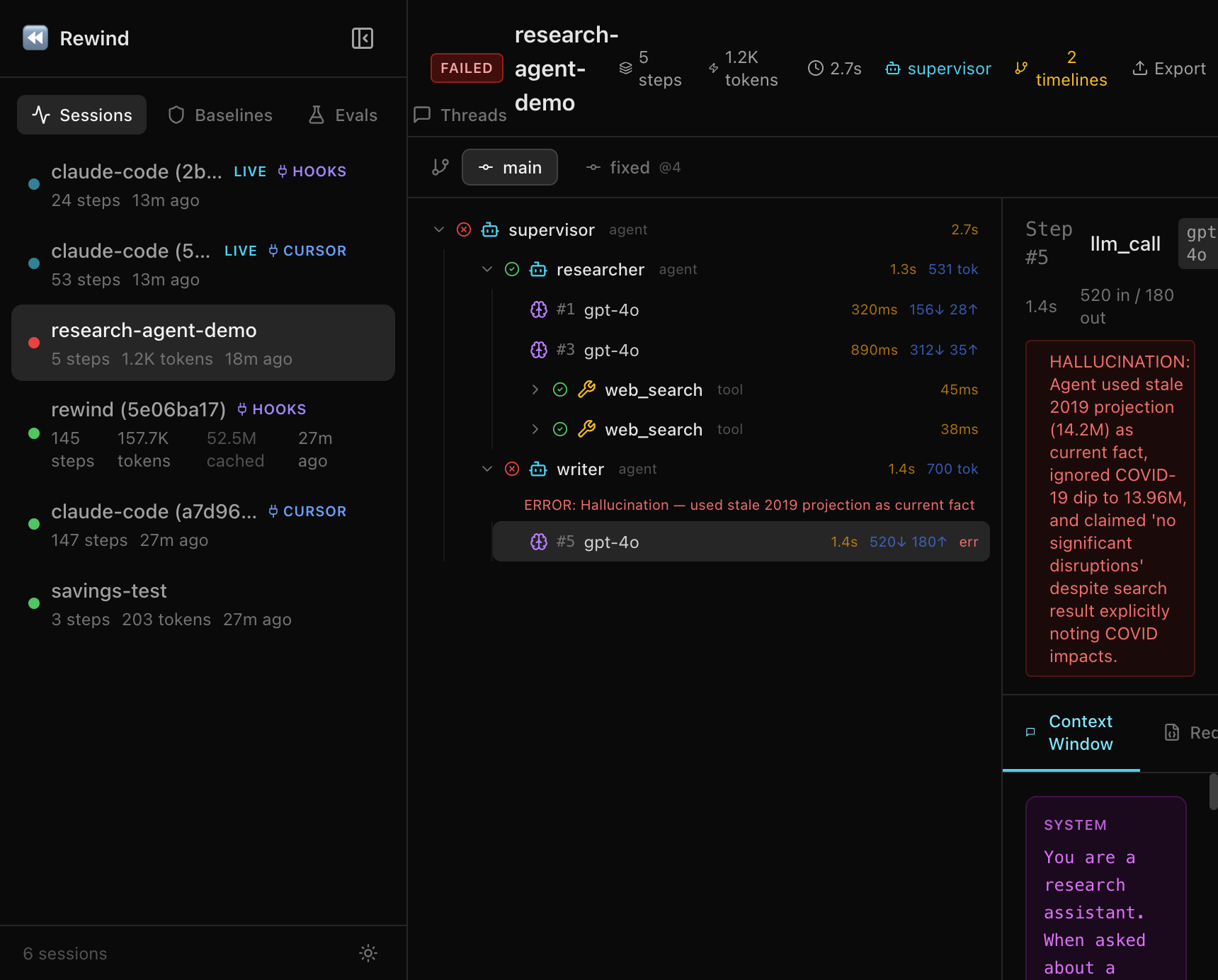

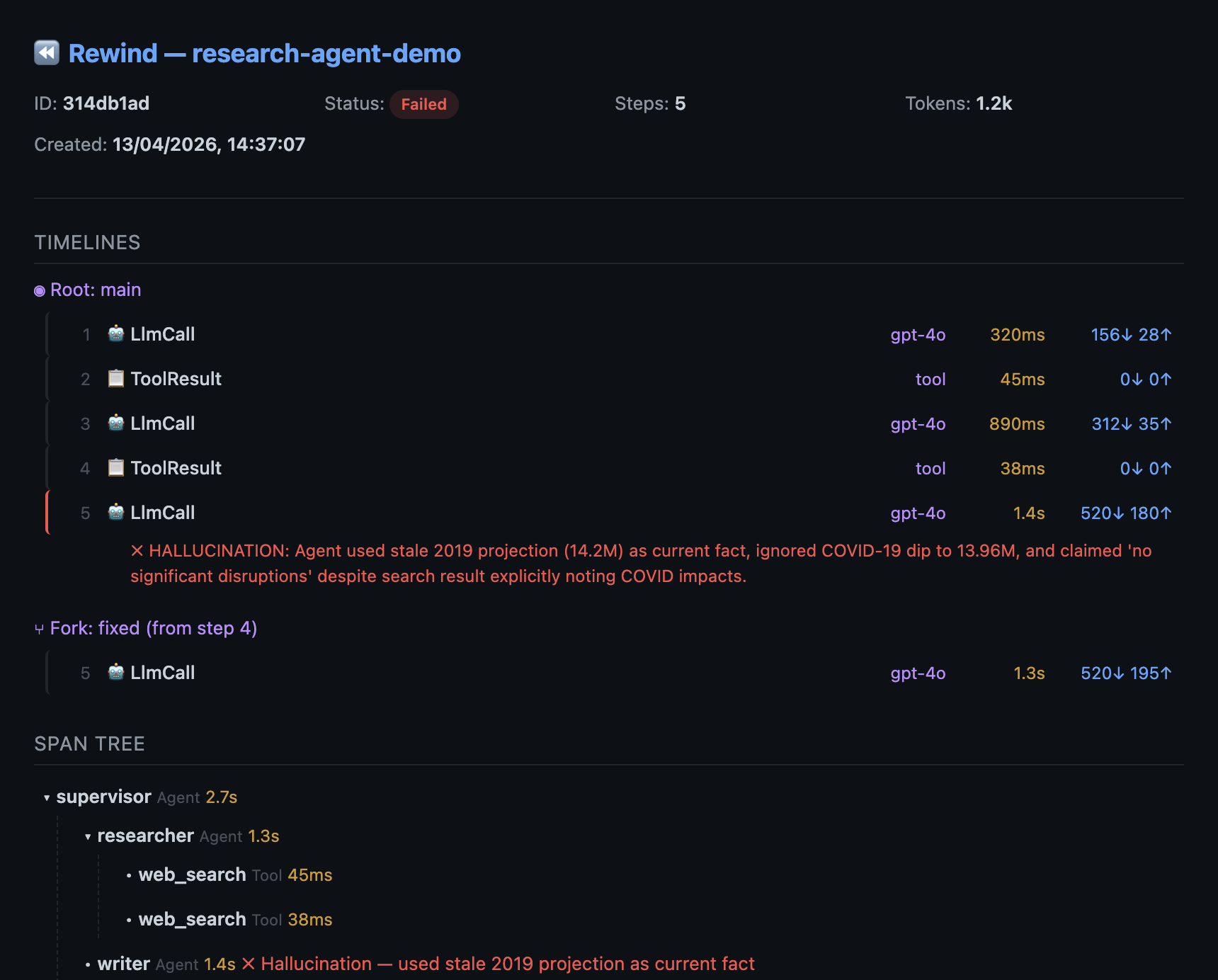

Fork & Replay

Branch at any step. Fix your code, replay from failure. Steps before the fork are cached (0 tokens, 0ms). No other tool does this.
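The fork-point caching idea can be sketched in a few lines of Python. This is a minimal illustration under stated assumptions, not Rewind's implementation: `Step`, `replay_from_fork`, and the `live_llm` callable are hypothetical names, inputs before the fork are assumed unchanged, and the token count is a crude word count.

```python
from dataclasses import dataclass
from typing import Callable, List, Tuple


@dataclass
class Step:
    prompt: str
    response: str
    tokens: int


def replay_from_fork(
    recorded: List[Step],
    prompts: List[str],
    fork_at: int,
    live_llm: Callable[[str], str],
) -> Tuple[List[Step], int]:
    """Rebuild a timeline, reusing recorded steps before `fork_at`.

    Steps with index < fork_at are copied from the recording at 0 tokens
    (their inputs are assumed unchanged); steps from fork_at onward call
    `live_llm`. Returns the new timeline and the number of live calls.
    """
    timeline: List[Step] = []
    live_calls = 0
    for i, prompt in enumerate(prompts):
        if i < fork_at:
            cached = recorded[i]
            timeline.append(Step(cached.prompt, cached.response, tokens=0))
        else:
            live_calls += 1
            response = live_llm(prompt)
            # Crude token estimate for illustration: count whitespace-separated words.
            timeline.append(Step(prompt, response, tokens=len(response.split())))
    return timeline, live_calls


# A recorded 30-step run that went wrong at step 30 (index 29).
recorded = [Step(f"step {i}", f"answer {i}", tokens=100) for i in range(30)]
prompts = [s.prompt for s in recorded]
prompts[29] = "step 29 with the fixed prompt"

new_timeline, live = replay_from_fork(
    recorded, prompts, fork_at=29, live_llm=lambda p: "fixed answer"
)
print(live)  # 1: only the forked step hits the model
```

Only the step at and after the fork costs anything; the 29 earlier steps are reused verbatim from the recording.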

AI Diagnosis & Repair

One command finds the root cause, suggests a fix (model swap, system prompt, temperature, retry), and verifies it works via fork + replay. Powered by rewind fix.

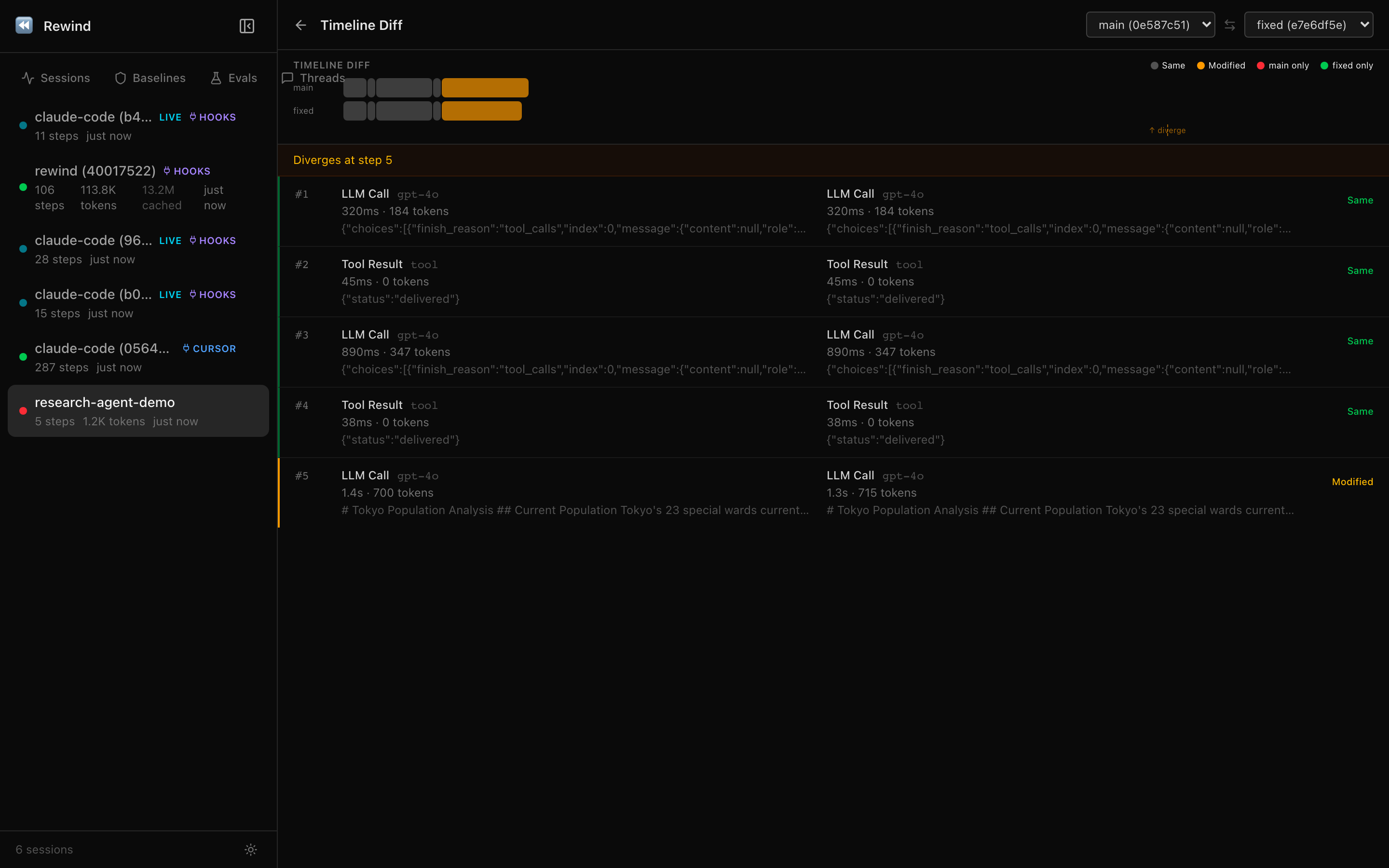

Prove the Fix

Score original vs. forked timelines with LLM-as-judge. Correctness, coherence, safety scored automatically. Ship with evidence, not hope.

Session Sharing

Generate a self-contained HTML file. Open in any browser, share via Slack or email. No install, no login, works offline.

Instant Replay

Identical requests served from cache at 0 tokens, 0ms. Run the same agent 10 times. Only the first hits the LLM.
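One common way to serve identical requests from cache is to hash a canonical form of the request and use that hash as the cache key. The sketch below assumes requests are JSON-serializable dicts; `ReplayCache`, `call_llm`, and the model name are hypothetical, and Rewind's actual cache key may be built from different fields.

```python
import hashlib
import json
from typing import Any, Callable, Dict


class ReplayCache:
    """Serve identical LLM requests from a local cache (illustrative sketch)."""

    def __init__(self, call_llm: Callable[[Dict[str, Any]], str]) -> None:
        self._call_llm = call_llm
        self._store: Dict[str, str] = {}
        self.live_calls = 0

    @staticmethod
    def key(request: Dict[str, Any]) -> str:
        # Canonical JSON (sorted keys, compact separators) so that
        # equivalent requests hash to the same key.
        canonical = json.dumps(request, sort_keys=True, separators=(",", ":"))
        return hashlib.sha256(canonical.encode("utf-8")).hexdigest()

    def complete(self, request: Dict[str, Any]) -> str:
        k = self.key(request)
        if k not in self._store:
            # Cache miss: this is the only path that reaches the model.
            self.live_calls += 1
            self._store[k] = self._call_llm(request)
        return self._store[k]


# Stand-in for a real model call, for illustration only.
cache = ReplayCache(call_llm=lambda req: f"echo: {req['messages'][-1]['content']}")
request = {"model": "example-model", "messages": [{"role": "user", "content": "hi"}]}
for _ in range(10):
    answer = cache.complete(request)
print(cache.live_calls)  # 1
```

Canonicalizing before hashing matters: two dicts with the same content but different key order must map to the same entry.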

Import & Debug

Import traces from Langfuse, Datadog, or any OTel backend. Fork at the failure, replay locally, export the fix back.

Regression Testing

Turn any session into a baseline. Check step types, models, tool calls, and token drift. Run it in CI with a 3-line GitHub Action.
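A baseline check of this kind boils down to comparing two step lists field by field. In this hypothetical sketch each step is a dict with `type`, `model`, `tool`, and `tokens` keys, and token drift is flagged when the relative change exceeds a tolerance (20% here, an arbitrary choice). Rewind's real session format and thresholds may differ.

```python
from typing import Any, Dict, List

Step = Dict[str, Any]


def check_regression(
    baseline: List[Step], candidate: List[Step], max_token_drift: float = 0.2
) -> List[str]:
    """Return human-readable differences between a baseline and a new run."""
    problems: List[str] = []
    if len(baseline) != len(candidate):
        problems.append(f"step count changed: {len(baseline)} -> {len(candidate)}")
    # zip() stops at the shorter list; extra steps are covered by the count check.
    for i, (old, new) in enumerate(zip(baseline, candidate)):
        for field in ("type", "model", "tool"):
            if old.get(field) != new.get(field):
                problems.append(
                    f"step {i}: {field} changed {old.get(field)!r} -> {new.get(field)!r}"
                )
        old_tokens = old.get("tokens", 0)
        new_tokens = new.get("tokens", 0)
        # Relative drift is only defined when the baseline used tokens.
        if old_tokens and abs(new_tokens - old_tokens) / old_tokens > max_token_drift:
            problems.append(f"step {i}: token drift {old_tokens} -> {new_tokens}")
    return problems


baseline = [
    {"type": "llm", "model": "model-a", "tokens": 100},
    {"type": "tool", "tool": "search", "tokens": 0},
]
candidate = [
    {"type": "llm", "model": "model-a", "tokens": 130},
    {"type": "tool", "tool": "search", "tokens": 0},
]
print(check_regression(baseline, candidate))
# ['step 0: token drift 100 -> 130']
```

An empty list means the new run matches the baseline; in CI, a non-empty list would fail the job.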

Snapshots

Checkpoint your workspace before an agent runs. Restore in one command if it breaks something. No git required.
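Conceptually, a workspace snapshot is a copy of the directory taken before the agent runs, and restoring means replacing the directory with that copy. The sketch below uses `shutil.copytree` on a throwaway temp directory; `snapshot` and `restore` are hypothetical helpers, and a real tool would likely deduplicate content and keep multiple named snapshots rather than one full copy.

```python
import shutil
import tempfile
from pathlib import Path


def snapshot(workspace: Path, store: Path) -> Path:
    """Copy the whole workspace into `store` and return the snapshot path."""
    dest = store / "snapshot"
    if dest.exists():
        shutil.rmtree(dest)  # keep a single, most recent snapshot
    shutil.copytree(workspace, dest)  # also creates missing parent directories
    return dest


def restore(snap: Path, workspace: Path) -> None:
    """Replace the workspace contents with the snapshot."""
    shutil.rmtree(workspace)
    shutil.copytree(snap, workspace)


root = Path(tempfile.mkdtemp())
workspace = root / "workspace"
workspace.mkdir()
(workspace / "config.txt").write_text("original")

snap = snapshot(workspace, root / "snapshots")

# Simulate an agent that clobbers a file and leaves junk behind.
(workspace / "config.txt").write_text("clobbered by agent")
(workspace / "junk.tmp").write_text("x")

restore(snap, workspace)
print((workspace / "config.txt").read_text())  # original
```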

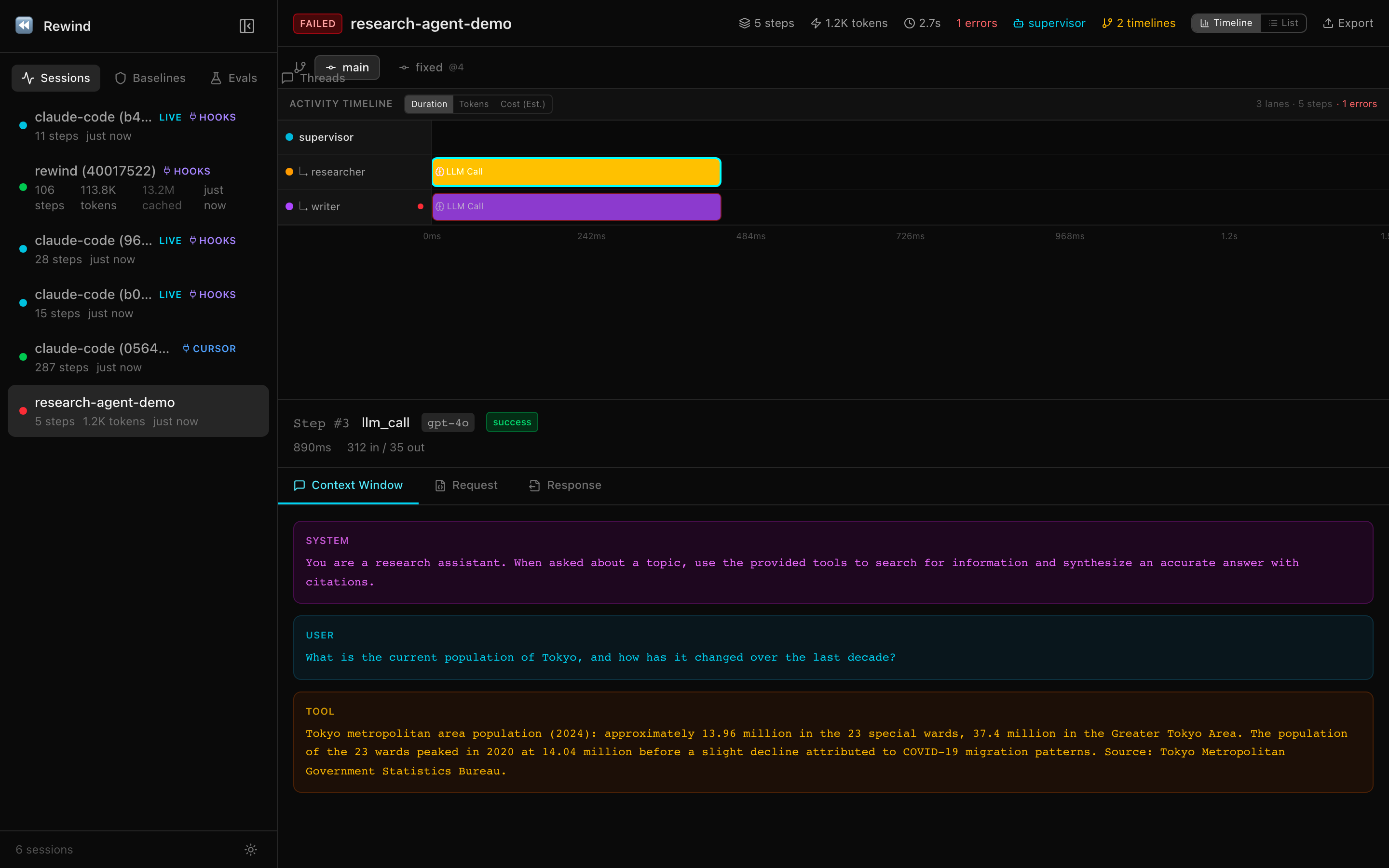

Web Dashboard

Browser-based session explorer with activity timeline (swim lanes), step list, context viewer, multi-metric axis, visual diff, and eval dashboard — all embedded in the binary.

SQL Explorer

Run ad-hoc SQL against the Rewind database. Token usage by model, cost estimation, session analytics. Read-only, safe to explore.

See every agent, tool call, and handoff

Hierarchical span tree and activity timeline for multi-agent workflows. Each agent gets its own swim lane with duration bars. Auto-captures agent boundaries and handoffs from the OpenAI Agents SDK. Thread view for multi-turn conversations.

Every Claude Code session. Automatically captured.

One command installs hooks. Every prompt, tool call, and file edit is recorded. No code changes needed. Also works with Cursor and Windsurf via MCP.

Integration flow

26 MCP Tools

Debug without leaving your editor

Ask your AI assistant to inspect a failed session, diff two timelines, or run an eval suite. All from within your IDE, no context switching.

```shell
# One-command setup
$ rewind hooks install

# Start the dashboard
$ rewind web --port 4800

# Sessions appear automatically
# at http://127.0.0.1:4800
```

Ship with confidence. Catch regressions in CI.

Create datasets, run your agent against them, score with 7 evaluator types, compare experiments side-by-side. Block merges when quality drops.

7 Evaluator Types

Built for CI/CD

- CI-ready with --fail-below thresholds

- GitHub Action for regression testing

- Side-by-side experiment comparison

- Per-example regression detection

- JSON output for dashboard ingestion

- Versioned datasets with deduplication
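A --fail-below style gate reduces to comparing aggregate scores against a threshold and returning a non-zero exit code. This sketch assumes scores in [0, 1] averaged per evaluator; `gate` is a hypothetical helper, and Rewind's own aggregation may differ.

```python
import statistics
from typing import Dict, List


def gate(scores: Dict[str, List[float]], fail_below: float) -> int:
    """Return a process exit code: 0 only if every evaluator's mean passes."""
    failed = False
    for evaluator, values in scores.items():
        mean = statistics.mean(values)
        status = "PASS" if mean >= fail_below else "FAIL"
        print(f"{evaluator}: mean={mean:.2f} [{status}]")
        failed = failed or mean < fail_below
    return 1 if failed else 0


exit_code = gate(
    {"exact_match": [1.0, 1.0, 0.0, 1.0], "llm_judge": [0.95, 0.9, 0.92]},
    fail_below=0.9,
)
print(exit_code)  # 1: exact_match averages 0.75
```

In a CI job the return value would be passed to `sys.exit`, which is what fails the build and blocks the merge.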

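```python
import rewind_agent

# my_agent is your agent function and llm_judge an LLM-as-judge
# evaluator; both are defined elsewhere in your code.
result = rewind_agent.evaluate(
    dataset="booking-tests",
    target_fn=my_agent,
    evaluators=["exact_match", llm_judge],
    fail_below=0.9,
)
```

See it in action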

A full debug cycle in under 60 seconds. Record, inspect, fork, diff.

The demo runs with built-in sample data. No OpenAI key, no cloud account, nothing to configure.

Watch the replay counter. Steps before the fork point return instantly from cache. Zero tokens, zero cost.

All data in a SQLite database on your machine. The entire tool is one binary. Nothing phones home.

Try it yourself, no API keys needed:

```shell
rewind demo && rewind inspect latest
```

Works with your stack

Already using Langfuse, LangSmith, or Datadog? You don't have to choose. Rewind works alongside them.

LLM Providers

Agent Frameworks

AutoGen, smolagents, custom code, any language

Observability stack

Import traces in, export sessions out, or dual-ship to both.

Start debugging in 30 seconds

One install. One line of code. Zero config.

```shell
$ pip install rewind-agent
```

```python
# Add one line to your agent:
import rewind_agent

rewind_agent.init()
```

```shell
# Run your agent, then inspect the session:
$ rewind show latest

# Or try the built-in demo (no API keys):
$ rewind demo && rewind inspect latest
```